You probably already own an AI PC.

In the past few months, PC makers have beaten the drum of the AI PC loudly and in concert with AMD, Intel, and Qualcomm. It’s no secret that “AI” is the new “metaverse,” and executives and investors alike want to use AI to boost sales and stock prices.

And it’s true that AI can take advantage of the NPUs found in chips like Intel’s Core Ultra, the brand Intel is positioning as synonymous with on-chip AI. The same goes for AMD’s Ryzen 8000 series (which beat Intel to the desktop with an NPU) and Qualcomm’s Snapdragon X Elite.

It’s just that the integrated NPUs found within the Core Ultra right now (Meteor Lake, with Lunar Lake waiting in the wings) do not play the outsized role in AI computation that they’re being marketed as playing. Instead, the more traditional sources of computational horsepower (the CPU and especially the GPU) contribute more to the task.

It’s important to note several things. First, actually benchmarking AI performance is something absolutely everyone is wrestling with. “AI” comprises rather divergent tasks: image generation, such as with Stable Diffusion; large language models (LLMs), the chatbots popularized by the (cloud-based) Microsoft Copilot and Google Bard; and a host of application-specific enhancements, such as the AI features in Adobe Premiere and Lightroom. Combine the numerous variables in LLMs alone (frameworks, models, and quantization, all of which affect how chatbots run on a PC) with the pace at which those variables change, and it’s very difficult to pick a winner, let alone one that stays on top for more than a moment.

The next point, though, is one we can make with some certainty: Benchmarking works best when you eliminate as many variables as possible. And that’s what we can do with one small piece of the puzzle: measuring how much the CPU, GPU, and NPU each contribute to AI calculations on Intel’s Core Ultra chip, Meteor Lake.

Mark Hachman / IDG

The NPU isn’t the engine of on-chip AI right now. The GPU is

We’re not trying to establish how well Meteor Lake performs in AI. But what we can do is perform a reality check on how much the NPU matters in AI.

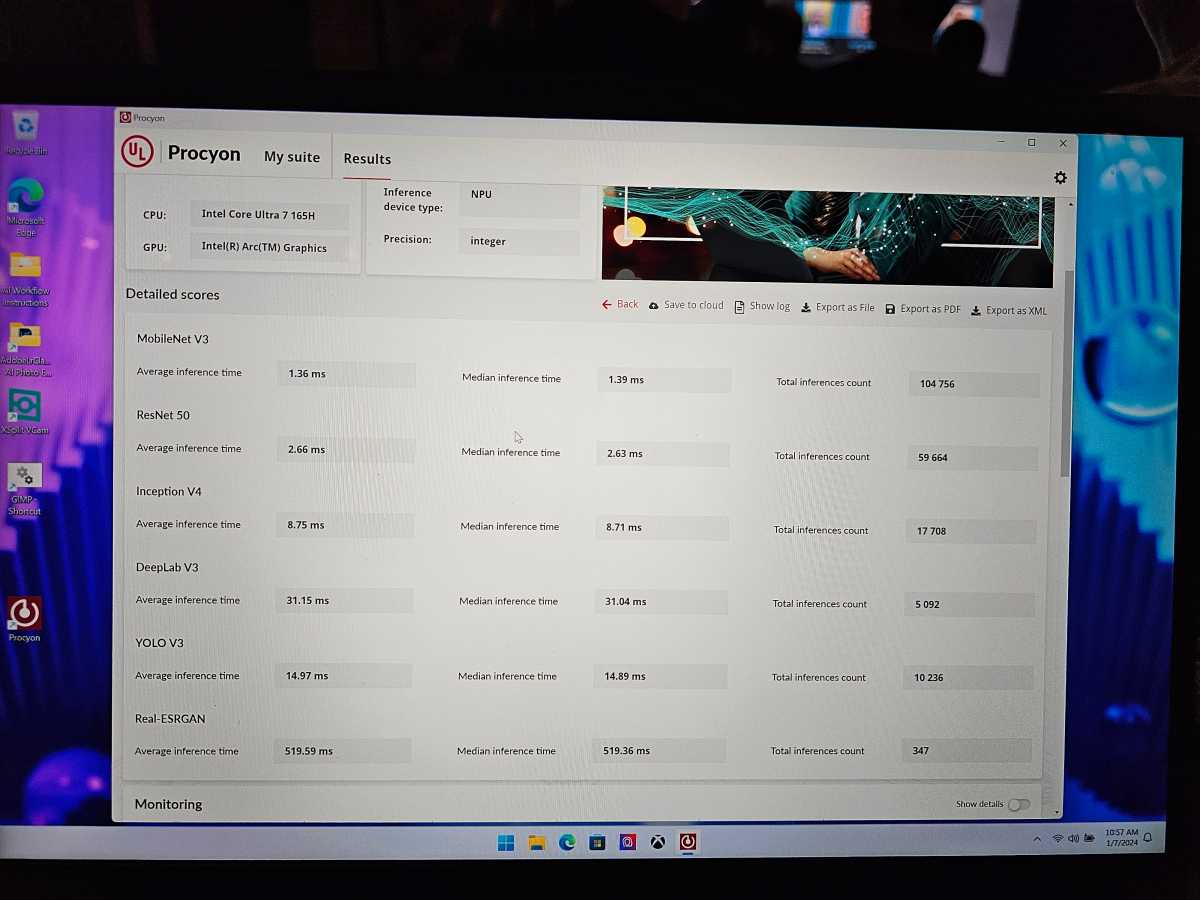

The specific test we’re using is UL’s Procyon AI inferencing benchmark, which measures how effectively a processor handles a set of AI inference workloads. Crucially, it lets the tester run those workloads separately on the CPU, GPU, and NPU and compare the results.

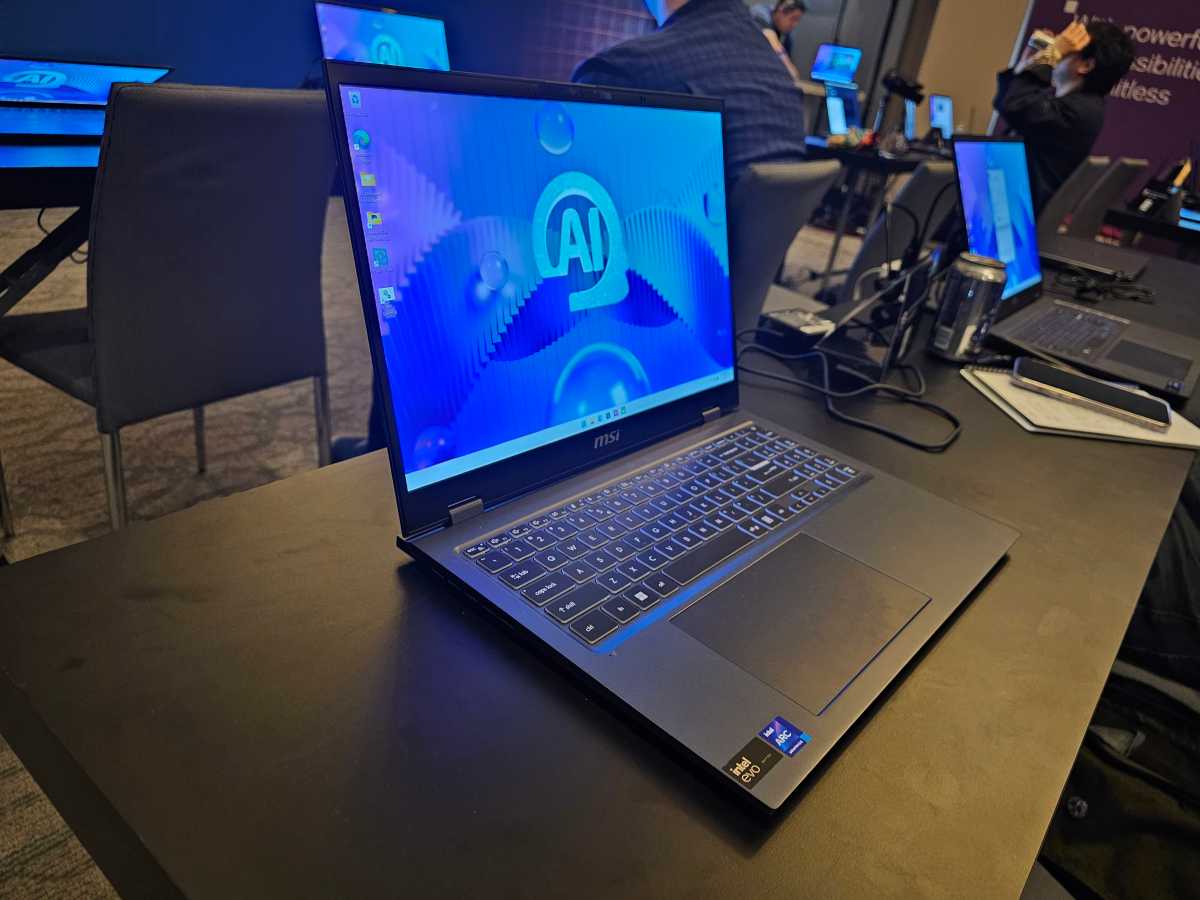

In this case, we tested the Core Ultra 7 165H inside an MSI laptop provided for testing during an Intel benchmarking day at CES 2024. (Much of my time was spent interviewing Dan Rogers of Intel, but I was able to get some tests in.) Procyon runs its AI models on the selected processor block and calculates a score based on performance, latency, and so on.

Without further ado, here are the numbers:

- Procyon (OpenVINO) NPU: 356

- Procyon (OpenVINO) GPU: 552

- Procyon (OpenVINO) CPU: 196

The Procyon tests proved several points: First, the NPU does make a difference; all by itself, it outperforms the CPU’s performance and efficiency cores by 82 percent. But the GPU outperforms the CPU by 182 percent (roughly 2.8 times the CPU’s score) and outperforms the NPU by 55 percent.
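Each of those comparisons is a simple ratio of two scores, and it is easy to blur “X percent of” with “X percent more than.” The minimal Python sketch below reproduces the arithmetic from the three Procyon scores. The helper names `percent_of` and `percent_higher` are hypothetical, chosen only for illustration, and the sketch assumes Procyon scores scale linearly with performance, so that ratios between them are meaningful.

```python
# Procyon (OpenVINO) scores for the Core Ultra 7 165H, as listed above.
# Assumption: a higher score means proportionally better performance.
SCORES = {"CPU": 196, "NPU": 356, "GPU": 552}


def percent_of(a: float, b: float) -> float:
    """Score a expressed as a percentage of score b."""
    return a / b * 100.0


def percent_higher(a: float, b: float) -> float:
    """How many percent higher score a is than score b."""
    return (a / b - 1.0) * 100.0


for fast, slow in [("NPU", "CPU"), ("GPU", "CPU"), ("GPU", "NPU")]:
    a, b = SCORES[fast], SCORES[slow]
    print(f"{fast} vs. {slow}: {percent_of(a, b):.0f}% of the score, "
          f"{percent_higher(a, b):.0f}% higher")

# Prints:
# NPU vs. CPU: 182% of the score, 82% higher
# GPU vs. CPU: 282% of the score, 182% higher
# GPU vs. NPU: 155% of the score, 55% higher
```

Keeping the two helpers separate makes the distinction explicit: a GPU score that is 182 percent higher than the CPU’s is 282 percent of it.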


Put another way: If you value AI, buy a large, beefy graphics card or GPU first.

But the second point is less obvious: Yes, you can run AI applications on a CPU or GPU without any need for a dedicated AI logic block. All the Procyon tests demonstrate is that some blocks are more effective than others.

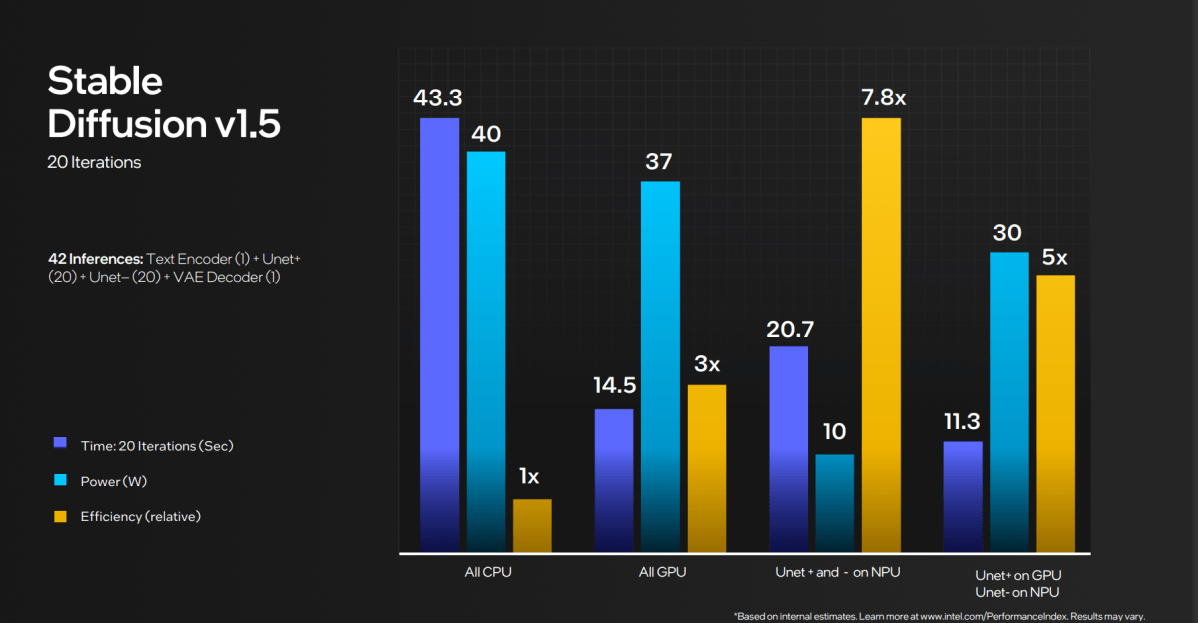

What Intel is saying (and, to be fair, has been saying) is that the NPU is more efficient. In the real world, “efficiency” is chipmaker code for “long battery life.” At the same time, Intel has tried to emphasize that the CPU, GPU, and NPU can work together.

Intel

In practice, the NPU’s efficiency pays off in AI applications that run for long stretches, probably on battery. The best example is a lengthy Microsoft Teams call from the depths of a hotel room or conference center (just like CES!), where AI is used to filter out noise and background activity.

AI art applications like Stable Diffusion first launched as a way to generate AI art locally using the power of your GPU and a ton of available VRAM. Over time, though, AI applications have evolved to run on less powerful configurations, including running mostly on the CPU. It’s a familiar pattern: You’re not going to run a graphics-intensive game like Crysis well on integrated hardware, but it should run, just very, very slowly. LLM chatbots behave the same way, “thinking” for a long time about their responses and then “typing” them out very slowly. LLMs that can run on a GPU will perform better, and cloud-based solutions will be much faster.

However, AI will evolve

It’s interesting, too, that (as of this writing) UL’s Procyon app recognizes the CPU and the GPU in AMD’s Ryzen AI-powered Ryzen 7040, but not the NPU. We’re in the very early days of the AI PC, when even the applications designed to use these chips don’t yet recognize their basic capabilities. That complicates testing even further.

The point is, however, that you don’t need an NPU to run AI on your PC, especially if you already have a gaming laptop or desktop. NPUs from AMD, Intel, and Qualcomm will be nice to have, but they’re not must-haves, either.


It won’t always be this way, though. Intel is promising that its upcoming Lunar Lake chip, due at the end of this year, will have three times the NPU performance of Meteor Lake. It hasn’t said anything about CPU or GPU performance. It’s very possible that, over time, the NPUs in various PC chips will grow until their AI performance massively outstrips that of the rest of the chip. And if not, a slew of AI accelerator chip startups hope to become the 3dfx of the AI world.

For now, though, it’s worth taking a deep breath as 2024 begins. New AI PCs matter, as do the new NPUs. But consumers probably won’t care as much as chipmakers do whether AI runs on their PC or in the cloud, no matter how loud the hype gets. And for those who do care, the NPU is just one piece of the overall solution.